Artificial intelligence in business is something of a nebulous topic at the moment.

Leaders are being told that they need AI in some capacity, but the how and why of implementation (if they're touched on at all) are obfuscated behind a dizzying array of technical jargon.

In his ElixirConf 2023 presentation titled Building AI Apps with Elixir, Charlie Holtz gives an overview of AI business applications using refreshingly simple language.

Importantly, he also focuses on the uses of AI rather than the specific implementation details:

[2:40] The way I've been thinking about AI [is] I'm not worrying about how it works or how to be a machine learning engineer or any of that. That's for other people to figure out. I want to think about all the ways I can use AI.

To that end, he addresses three types of applications:

- [2:40] "Magic" AI box

- [8:00]

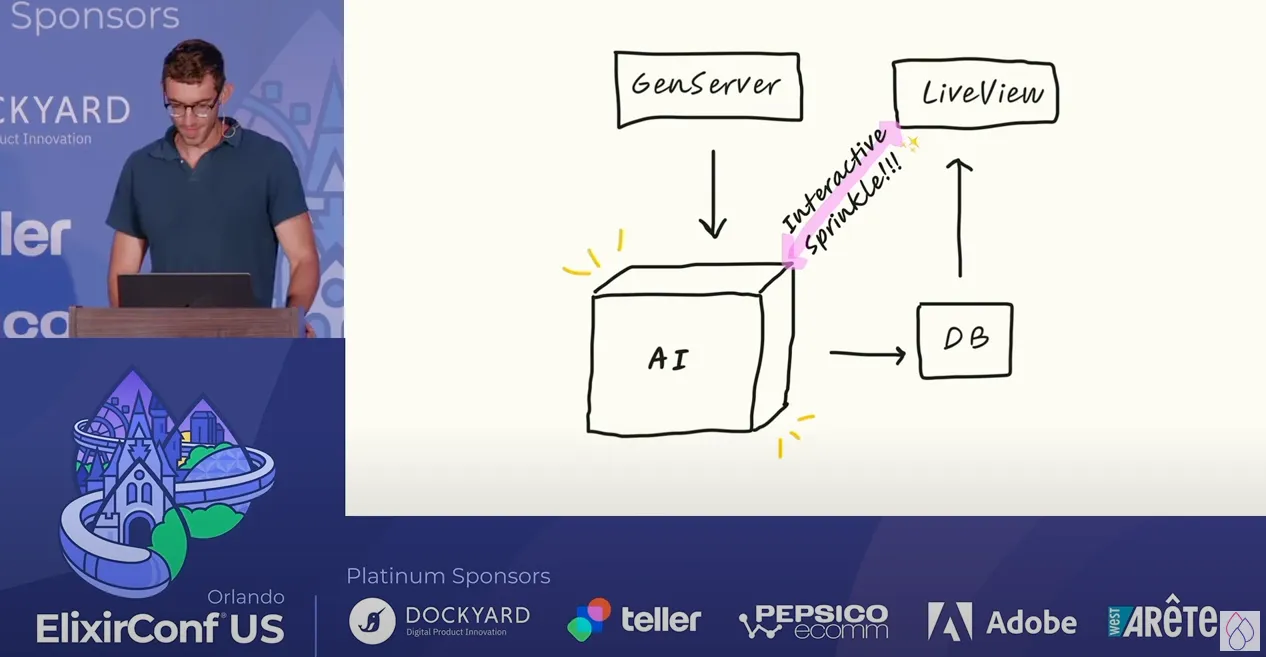

GenServerGenerator with "interactive sprinkle" (more explanation on this below) - [16:35] Agents

"Magic" AI Boxes

The "magic box" that Charlie refers to could be any sort of AI tool that takes an input and transforms it in an interesting or useful way.

For most people, this is probably the most straightforward business application of artificial intelligence since there are already some well-known examples on the market. ChatGPT, for instance, accepts a written input and outputs text, while Stable Diffusion accepts a written prompt and outputs an image.

If you're looking for a more specific example, take a look at Quora's AI product called Poe, which accepts questions and provides answers. Charlie also points to PhotoAI.com and InteriorAI.com as examples of successful AI-focused products.

That being said, it's unlikely that most companies will reposition their entire product around AI. It's far more likely that they will simply incorporate AI features into existing products to provide improved user experiences.

Google Analytics (GA) provides a great example of this with its search bar. On your GA dashboard you have access to all sorts of data and reports via traditional UI elements like buttons, links, windows, and graphs. However, it's often quicker and easier to summarize your data by typing a question into the GA search bar.

For example, you could calculate the number of visitors from organic search for the previous quarter by filtering your data and clicking through the dashboard user interface, or you could submit a question in the search field (e.g., "how many users visited my site last quarter via organic search?").

This provides the best of both worlds: leveraging AI-powered search to provide rapid, useful insights while still retaining the traditional UI for more in-depth analysis.

GenServer Generator with "Interactive Sprinkles"

You don't need to understand what a GenServer is to grasp this concept (though, if you're curious, it's a special kind of Elixir process).

Charlie's main assertion is that, in some cases, you can use AI to generate data for your applications rather than manually adding/retrieving data from some external source.

In his example, he wanted to create a quiz for testing people's knowledge of meteorological aerodrome reports (METARs). Such an app would normally require a database of questions and answers, but Charlie decided to go another route:

[9:20] There are a few ways I could have populated that data. I could have done it by hand. I could have written a bunch of METARs by hand. I could have maybe found a weather API and pulled the METARs from there. But that would be tedious and not that fun, so what I decided to do was I used GPT-4 in this case to generate a METAR from scratch and then also generate a question about that METAR.
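To make the generation step a little more concrete, here is a minimal sketch written in Python for illustration, not the code from the talk. The prompt wording, the `QuizItem` type, and the `complete` callable (a stand-in for whatever LLM client you use) are all assumptions. Because model output is not guaranteed to be well-formed, the sketch retries when the reply is not valid JSON or the METAR does not start with a station identifier and a day/time group.

```python
import json
import re
from dataclasses import dataclass
from typing import Callable

# Hypothetical prompt; the talk does not show the exact wording that was used.
PROMPT = (
    "Generate one realistic METAR and one quiz question about it. "
    'Reply with JSON only: {"metar": "...", "question": "..."}'
)

# Loose shape check only: optional "METAR", a 4-character station ID,
# then a day/time group such as 121754Z. It does not prove the report is correct.
METAR_SHAPE = re.compile(r"^(?:METAR\s+)?[A-Z0-9]{4}\s+\d{6}Z\b")


@dataclass
class QuizItem:
    metar: str
    question: str


def generate_quiz_item(complete: Callable[[str], str], max_attempts: int = 3) -> QuizItem:
    """Ask the model for a quiz item, retrying when the reply is unusable."""
    for _ in range(max_attempts):
        try:
            data = json.loads(complete(PROMPT))
        except json.JSONDecodeError:
            continue  # reply was not JSON, so ask again
        if not isinstance(data, dict):
            continue
        metar = str(data.get("metar", "")).strip()
        question = str(data.get("question", "")).strip()
        if METAR_SHAPE.match(metar) and question:
            return QuizItem(metar, question)
    raise ValueError("model did not return a usable quiz item")


def fake_model(prompt: str) -> str:
    """Stand-in for a real LLM call so the sketch runs offline."""
    return json.dumps({
        "metar": "KBOS 121754Z 27015G25KT 10SM FEW050 18/07 A2992",
        "question": "What is the wind direction and speed?",
    })


if __name__ == "__main__":
    print(generate_quiz_item(fake_model))
```

In a real app, `fake_model` would be replaced by a call to your LLM provider. The shape check only catches malformed replies; it cannot tell whether a well-formed METAR is meteorologically sensible.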

This particular use of GPT-4 (a specific type of AI model known as a large language model, or LLM) could actually lead to a better user experience, because the questions aren't limited to the ones in your database.

However, there's an important caveat to AI data generation that Charlie points out. He mentioned that he would "continually get feedback" from users that the METARs weren't quite correct.

LLMs like GPT-4 are prone to outputting plausible-sounding but incorrect information. In the AI engineering world, these errors are known as hallucinations.

So if you'd like to use AI for data generation, remember that you'll likely need to tweak your prompts to minimize hallucinations.

You may have noted that up to this point we've only discussed the generation of questions with AI, but haven't described how the answers to those questions are evaluated. Well, that's the "interactive sprinkle"!

For a given question, Charlie used GPT-3.5 to evaluate the user's answer as either correct or incorrect, then provided feedback on the answer.

As he notes, this provides a "really nice user experience" since "it feels like there's a human on the other side that is determining whether your answer is correct or not".

This strategy also has numerous uses outside of this class of application. For example, I could easily imagine using an LLM to moderate comments on a forum.

Each comment could be evaluated as "good" or "bad", where "bad" comments could be automatically taken down or quarantined. You could additionally use the LLM to respond to these "bad" comments with helpful tips, warnings, clarifying questions, and so on.

Using AI moderators could have numerous benefits, such as reducing overhead expense, mitigating human bias, and sheltering human moderators from potentially traumatic content.
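The moderation idea above can be sketched in a few lines. This is an illustrative Python sketch under stated assumptions, not a production moderation system: the prompt text, the `Decision` type, and the `complete` callable (standing in for any LLM client) are hypothetical. Since a model may reply with something other than the two expected labels, the sketch quarantines anything it cannot interpret so a human can review it.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical prompt; tune the wording for your own community guidelines.
CLASSIFY_PROMPT = (
    "You are a forum moderator. Classify the comment below as good or bad. "
    "Answer with a single word.\n\nComment: {comment}"
)


@dataclass
class Decision:
    comment: str
    verdict: str  # "good", "bad", or "needs_review"
    action: str   # "publish" or "quarantine"


def moderate(comment: str, complete: Callable[[str], str]) -> Decision:
    """Classify one comment and decide what to do with it."""
    raw = complete(CLASSIFY_PROMPT.format(comment=comment))
    # Normalize replies such as "Bad." or " GOOD " to a bare lowercase label.
    verdict = raw.strip().strip(".!\"'").lower()
    if verdict == "good":
        return Decision(comment, "good", "publish")
    if verdict == "bad":
        return Decision(comment, "bad", "quarantine")
    # Unexpected reply: hold the comment for a human instead of guessing.
    return Decision(comment, "needs_review", "quarantine")


def keyword_model(prompt: str) -> str:
    """Stand-in for a real LLM call so the sketch runs offline."""
    comment = prompt.split("Comment: ", 1)[1]
    return "Bad." if "idiot" in comment.lower() else "good"


if __name__ == "__main__":
    for text in ["Great write-up, thanks!", "You are an idiot."]:
        print(moderate(text, keyword_model))
```

The same shape works for grading quiz answers: swap the prompt for one that includes the question and the user's answer, and map the labels to "correct" and "incorrect".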

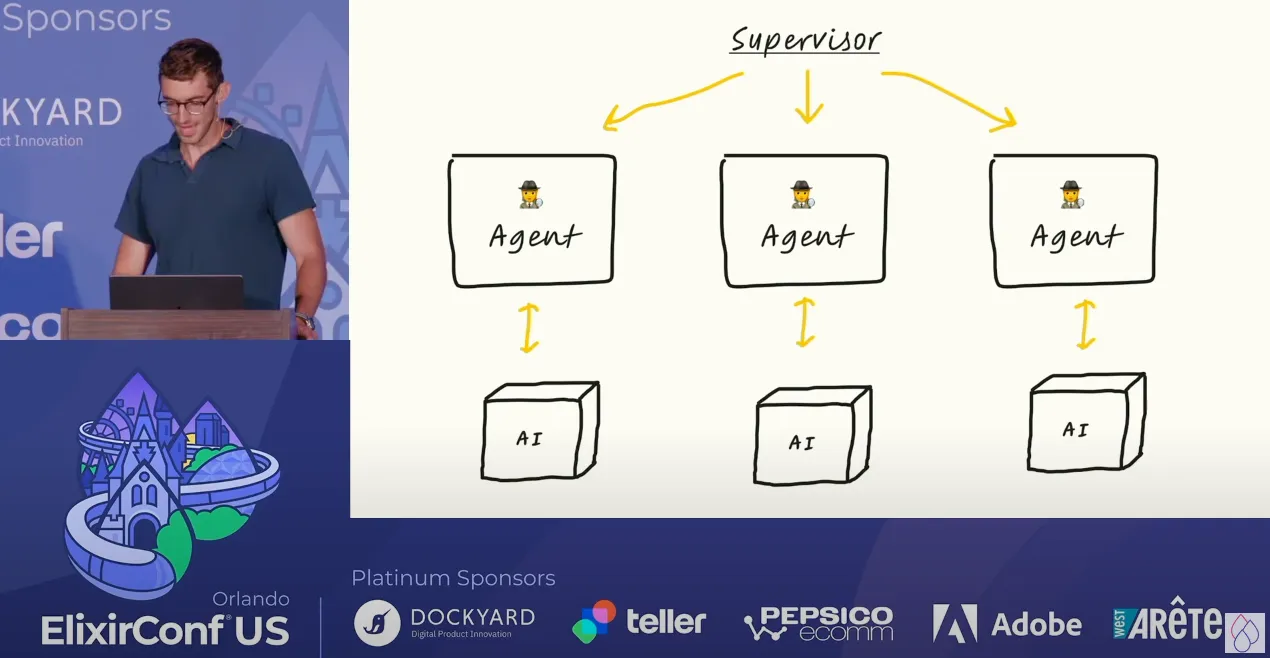

Agents

Of the three concepts presented, this is probably the most difficult to grasp. First, what is a generative agent?

For the sake of this conversation, let's define a generative agent as an artificial user in an application that behaves like a human user. Each agent's behavior is governed by AI, or as Charlie puts it, each generative agent "has a large language model serving as their brain".

While that's an interesting concept, what are the business applications of generative agents?

The article "Computational Agents Exhibit Believable Humanlike Behavior", an overview of the Stanford paper that Charlie references, suggests that these tools would be highly applicable in simulating outcomes.

For example, if you're developing a social media app, you could use generative agents to test the application prior to launching the app. This would give your team deeper insights into user behavior earlier in the development process, allowing them to better optimize the application before presenting it to real users.

Outside of direct app development, my immediate reaction is that generative agents could be used in employee training.

You could, for instance, create an "angry" agent and use it to train your customer service representatives to better handle such clients. You could also create agents that mimic the traits of your highest value customers, using those to teach your reps to better meet their needs.
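As a rough illustration of the "angry" agent idea, the Python sketch below models an agent as a persona, a running memory of the conversation, and a "brain" function. All names here (`GenerativeAgent`, `respond`, `scripted_brain`) are hypothetical, and the brain is a scripted stand-in for a real LLM call. It is a simplified sketch, not the architecture from the talk or the Stanford paper.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class GenerativeAgent:
    name: str
    persona: str
    brain: Callable[[str], str]  # any function mapping a prompt to a reply
    memory: List[str] = field(default_factory=list)

    def respond(self, message: str) -> str:
        """Record the rep's message, ask the brain for a reply, and remember it."""
        self.memory.append(f"Rep: {message}")
        prompt = (
            f"You are {self.name}. {self.persona}\n"
            "Stay in character and reply to the latest message.\n\n"
            + "\n".join(self.memory)
        )
        reply = self.brain(prompt).strip()
        self.memory.append(f"{self.name}: {reply}")
        return reply


def scripted_brain(prompt: str) -> str:
    """Stand-in for a real LLM call: the reply depends on how many turns have passed."""
    turns = prompt.count("Rep: ")
    if turns == 1:
        return "This is the third time I've had to call about this!"
    return "Fine, but I want a refund."


if __name__ == "__main__":
    customer = GenerativeAgent(
        name="Dana",
        persona="You are an angry customer whose order arrived damaged.",
        brain=scripted_brain,
    )
    print(customer.respond("Hello, how can I help you today?"))
    print(customer.respond("I'm sorry about that. Let me look into it."))
```

Keeping the conversation in `memory` and replaying it in each prompt is what lets the agent stay consistent across turns; a trainee would simply take the place of the hard-coded rep messages.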

Personally, I wonder if these tools could also be used for penetration testing. Companies could create "hacker" agents that attempt to phish employees or otherwise gain access to privileged resources, granting insight into cybersecurity weaknesses.

Agents with "AI brains" are a fairly fresh invention, though, so only time will tell if any of these potential applications bear fruit.

If you'd like to learn more about agents or any of the other topics discussed in this article, I'd highly recommend watching Charlie Holtz's full presentation.

In this article, we've discussed three broad approaches for using AI to solve business problems, as presented at ElixirConf 2023. We've also used those approaches as jumping-off points to discuss our own hypothetical business applications of AI.

But we're still in the early innings of a technological shift that could have far-reaching implications for how we build software. So take a look at our other AI business applications posts if you're interested in learning more.


Mike is Co-Founder of The Gnar Company, a Boston-based software development agency where he leads project delivery for clients like Whoop, Kolide (acquired by 1Password), LevelUp (acquired by GrubHub), Qeepsake (featured on Shark Tank), and AARP. With over a decade of experience building impactful software solutions for startups, SMBs, and enterprise clients, Mike brings an unconventional perspective, having transitioned from professional lacrosse to software engineering and applying an athlete's mindset of obsessive preparation and relentless iteration to every project. As AI reshapes software development, Mike has become a leading practitioner of agentic development, leveraging the latest AI-assisted practices to deliver high-quality, production-ready code in a fraction of the time traditionally required.